Datastores in any typical virtualized environment are critical components that hold virtual disks, snapshots, and other data that keeps your VMs alive. Like any storage, over time datastores tend to fill up, and when the percentage of used space reaches worrying levels it’s time to look for stuff that can be thrown away in order to clear the mess before a storage crisis unfolds.

The best candidates for immediate deletion are files that occupy significant space and are not actually used, or, as it may be in some cases, are not even connected to any VMs. So this is my mission: find all files on a VMware datastore, see how long they have gone unchanged, and then determine whether they are still useful or can be deleted safely.

To accomplish the task, I will be using the VMware PowerShell module (AKA PowerCLI). As you will see, using PowerShell has its advantages (such as the potential for automating the process of searching the datastores, and being able to perform simple root-cause analysis for disk space shortage without third-party software), as well as several drawbacks and caveats.

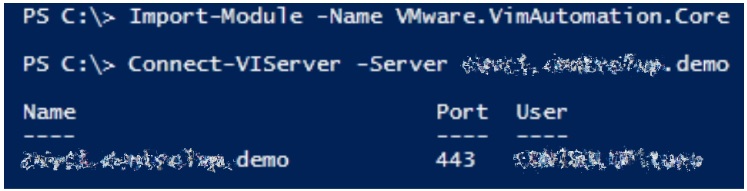

Let’s start with initializing our PowerShell session and establishing a connection to the vCenter server:
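A minimal sketch of that first step, assuming the VMware.PowerCLI module is already installed. The function name, its parameters and the server name in the usage comment are illustrative placeholders, not part of the original script:

```powershell
# Sketch only: function and parameter names are illustrative assumptions.
function Connect-VCenterSession {
    param(
        [Parameter(Mandatory)]
        [string]$Server,

        # Optional; when omitted, Connect-VIServer falls back to its own defaults
        [pscredential]$Credential
    )

    # Load PowerCLI (assumes the VMware.PowerCLI module is installed)
    Import-Module VMware.PowerCLI -ErrorAction Stop

    # Build the argument set, adding credentials only when supplied
    $connectArgs = @{ Server = $Server; ErrorAction = 'Stop' }
    if ($Credential) { $connectArgs.Credential = $Credential }

    # Return the server object so it can be passed to later -Server arguments
    Connect-VIServer @connectArgs
}

# Usage (hypothetical server name):
# $VCenter = Connect-VCenterSession -Server 'vcenter.example.local'
```

Keeping the returned object in a variable such as $VCenter makes it easy to pass it explicitly to the -Server parameter of later cmdlets.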

The first thing I need to do is to gain a view of the storage from the vSphere perspective. Of course, there may be ways of gaining direct access to the storage array. However, for the task at hand, it is important that I see the same picture that my virtualization infrastructure sees.

Datastores as PSDrives

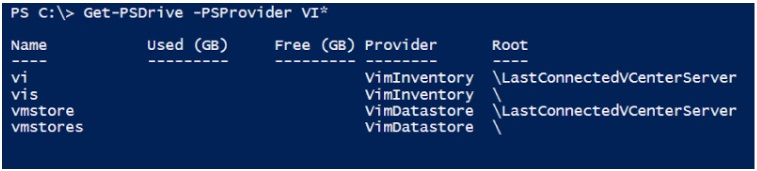

The first technique I will discuss is leveraging the fact that PowerCLI exposes PSDrives for the available file datastores. This enables us to look at the virtualization datastores as if they were regular disk drives. Sounds promising, so let’s give it a try:

There are VimInventory drives (which, as the name suggests, are an inventory of the configurations) and VimDatastore drives. The vmstores drive contains all the known datastores, while vmstore holds the ones available on the vCenter server you are currently connected to. That’s the one we want, and we are working with our datacenter that’s conveniently called Datacenter and datastore DS01. It’s a drive, so Get-ChildItem should work:

Get-ChildItem -Path vmstore:\Datacenter\DS-NFS-00

LESSON LEARNED: I live in a Microsoft world where we all happily frolic in a field of files that don’t care if you use the correct capitalization when you call them. Not so in the harsh mountains of Linux: make sure you get the capitalization correct. For example, in my case with the datastore named “DS-NFS-00”, the following caused an error:

Now, when leveraging the -Recurse parameter, I should be able to traverse the entire datastore:

Get-ChildItem -Path vmstore:\Datacenter\DS-NFS-00 -Recurse -Force

And you get plenty of results. But it takes a long time.

In my case, DS01 has about 27,000 files, and enumerating them with Get-ChildItem took an hour… And in an enterprise environment, 27,000 files is not that many. Get-ChildItem is notoriously slow, but this was ridiculous. There has to be a faster way!

LESSON LEARNED: If you get the spelling of a folder in the ROOT of your Windows drive wrong AND do not end your path with a backslash, e.g.

Get-ChildItem c:\tamp

instead of

Get-ChildItem c:\temp

Get-ChildItem apparently decides to search your entire root drive… Fun if you use -Recurse. Even better with -Force.

SearchDatastoreSubFolders method

Enter the VMware SearchDatastoreSubFolders method, credits to @LucD22 who has some great information on finding orphaned files on his website: http://www.lucd.info/2016/09/13/orphaned-files-revisited/

LucD’s script is very comprehensive, but because the end game will use a slightly different approach I just wanted to get the files first and see what I get. SearchDatastoreSubFolders is a bit more complicated but gives you lots of options. A simple search is set up like this:

[cc lang="powershell"]
# Set View
$DatastoreView = Get-View $Datastore.Id

# Create new search specification for SearchDatastoreSubFolders method
$SearchSpecification = New-Object VMware.Vim.HostDatastoreBrowserSearchSpec

# Create the file query flags to return Size, type and last modified
$fileQueryFlags = New-Object VMware.Vim.FileQueryFlags
$fileQueryFlags.FileSize = $true
$fileQueryFlags.FileType = $true
$fileQueryFlags.Modification = $true

# Set the flags on the search specification
$SearchSpecification.Details = $fileQueryFlags

# Set the browser (Get-View resolves the browser reference into a view object)
$DatastoreBrowser = Get-View $DatastoreView.Browser

# Set root path to search from, in the form "[DatastoreName]"
$RootPath = ("[" + $Datastore.Name + "]")

# Do the search
$SearchResult = $DatastoreBrowser.SearchDatastoreSubFolders($RootPath, $SearchSpecification)
[/cc]

Nice, this took half the time used by Get-ChildItem. Still very slow though, and because it was sometimes going just over the 30 minute mark I was getting timeout errors. There is a default timeout setting of 30 minutes for remote commands on VMware vCenter. I have to assume some of our users have the same default setting.

LESSON LEARNED: If we are forced to deal with vCenter-targeted operations that are expected to last longer than 30 minutes, the following line is needed in order to override the default timeout (in my case, setting it to 3 hours or 10800 seconds):

Set-PowerCLIConfiguration -Scope Session -WebOperationTimeoutSeconds 10800 -Confirm:$false

It just happened to be the case that our infrastructure was undergoing a migration between two storage systems, and for this purpose some storage connections were duplicated for the course of the migration. So, a weird complication occurred – when invoking Get-Datastore by name, I got two results because at the moment two datastores by this name were available.

LESSON LEARNED: Even though this is an unlikely condition, when connecting to a datastore by name, like so:

$Datastore = Get-Datastore -Name $DatastoreName -Server $VCenter

it may be prudent to ensure you only get one datastore by running something like:

if (@($Datastore).Count -gt 1) {
    # Show a warning that more than one datastore was found
    Write-Warning "More than one datastore named '$DatastoreName' was found."
}

Our vCenter has been a bit stressed out lately, so I decided to wait for a planned infrastructure upgrade to try this again on upgraded hardware. When I got back to this a few weeks later I expected the file search to be a bit faster.

To my surprise, search times with both methods were reduced by over 90%! We are not exactly sure why this is so much faster; it’s not like the environment was lousy before the upgrade and everything is 10x as fast now. I asked our VMware guy Ze’ev Eisenberg (@eisenbergz) what had changed, and this is what he did:

vCenter went from v6.0 (Windows 2008R2 based, separate SQL server) to v6.7U1 (VCSA). The hardware went up a couple of generations, newer faster CPU. ESXi moved from 1Gb/s switch to 10Gb/s switch, although the Filer itself was always at 10Gb/s.

LESSON LEARNED: A vCenter server may present a significant performance bottleneck. If you’re experiencing extreme slowness, don’t hurry with blaming the storage or the virtualization hosts, since the vCenter box may well be the one to blame. Make sure you review the hardware specs in case you’re experiencing slowness, and consider upgrading to the latest virtual appliance. Performance indicators may not be showing you the whole picture here. As I was not seeing any real increase in stress on the vCenter box while running my tests, I did not think it could be the actual vCenter, but it looks like it played a big part after all.

Getting all files on a 9TB NFS datastore using the Get-ChildItem method took just under 3 minutes, as reported by Measure-Command:

(Measure-Command {gci $path -Recurse -Force}).TotalMinutes

TotalMinutes : 2.78914866833333

The same operation took only 16 seconds using the SearchDatastoreSubFolders method:

(Measure-Command {$DatastoreBrowser.SearchDatastoreSubFolders($RootPath, $SearchSpecification)}).TotalMinutes

TotalMinutes : 0.273544748333333

But this kept getting lower during the course of my testing. The first few times I tested this, the DatastoreBrowser method took half the time of the Get-ChildItem method; by the time these tests were done it was sometimes as low as 4 seconds. But during most of my testing it was a lot slower, more on this later…

Still scratching my head wondering how a system that needs to access files really fast can be relatively slow when trying to get what amounts to a relatively simple directory listing, I also noticed that the Get-ChildItem method returned a significantly lower number of files than DatastoreBrowser.

Get-Childitem: 2568 files

DatastoreBrowser: 4862 files

Why was this happening?

A closer look at the results reveals a few differences between the output of the two methods:

- The DatastoreBrowser method lists all virtual disks in a relatively straightforward manner – one entry for each vmdk file, while the Get-ChildItem method will show multiple entries wherever snapshots are involved. For a disk shown by DatastoreBrowser as a single vmdk, Get-ChildItem may show multiple entries with file names ending with “-flat.vmdk”, “-delta.vmdk”. More on this below, when it’s time to see how much space those files consume…

- The DatastoreBrowser method output will include files like logs and other configuration items (like .vmx, .nvram) which the Get-ChildItem method does not display. While this difference accounts for many files, it is not very material for our cause, for two reasons:

  - These files are unlikely to take up a large portion of your storage; it’s mainly virtual disks we’re interested in

  - Since those are system files, it is not recommended to delete or otherwise tamper with them

- ISO files are another example of files that will show up in the DatastoreBrowser method output, but not when using Get-ChildItem. Keep this in mind, since ISO files can take up significant disk space.

Get-ChildItem is not the Get-ChildItem we know from Windows. In this case, it works on top of a provider from VMware geared towards their datastore, and as Microsoft states:

“PowerShell providers are Microsoft .NET Framework-based programs that make the data in a specialized data store available in PowerShell so that you can view and manage it.”

Note SPECIALIZED: it is the way VMware wants us to see this drive, not the way we may expect from a ‘dir /s’ on a Windows box.

So the DatastoreBrowser method not only retrieved more files, it also did it a lot faster than Get-ChildItem. If it takes less than a minute to scan a few terabytes, I guess we can live with that. However, for larger (and/or slower) datastores you may want to speed up the scan a bit more. Another 9 TB datastore of ours, which is also more heavily utilized, took about 13 minutes to scan, which can be a bit too long to wait.

Let’s take a look at what’s happening under the hood of DatastoreBrowser.

Before the actual search gets launched, the script code shown above has a few lines that set some options using FileQueryFlags. These flags specify which information to retrieve, specifically whether we’re interested in getting the owner, size, type and modification time for each item. Let’s take a look at the effect of changing these flags on the query duration.

Searching with only one flag set at a time:

fileOwner: 30 seconds

fileSize: 24 seconds

fileType: 152 seconds

modified: 28 seconds

Combining all flags except fileType:

fileOwner, fileSize and modified: 30 seconds

So it seems that the fileType flag is the one slowing down the entire search. I was thinking too much Windows, assuming that the file type was a simple lookup based on extension, but it is not. vCenter takes the ‘.vmdk configuration file’, actually opens it, matches it with the -flat, -delta, etc. files of the same name, and comes up with a complete description of the ‘package’. That’s how it arrives at the complete file description, and why it does not show these files separately: it just shows the whole package. So we are opening a whole lot of small files, something every storage subsystem loves.

It is also important to note that this information does not seem to be registered in the cluster. If you copy and paste a ‘vmdk package’ into the datastore on the actual SAN it will be treated the same way by vCenter. The results will show the whole package information even though vCenter has never imported the disk and is just seeing the .vmdk files now.

What is nice about this, is that when you see a *-flat.vmdk file in the results, you can suspect this is an orphaned file. The disk data file is there but the configuration file is not, so there is no way VMware can be using this file.
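The check described above can be sketched on a plain list of path strings. The function name and the sample paths below are made up for illustration, and the extra test for a sibling descriptor is an added safeguard for listings that do show extents next to their descriptors:

```powershell
# Sketch only: function name and sample paths are illustrative assumptions.
function Find-FlatFileWithoutDescriptor {
    param([string[]]$Path)

    # Case-sensitive lookup, because datastore paths are case-sensitive
    $known = [System.Collections.Generic.HashSet[string]]::new([System.StringComparer]::Ordinal)
    foreach ($p in $Path) { [void]$known.Add($p) }

    foreach ($p in $Path) {
        # A data extent is named <disk>-flat.vmdk; its descriptor is <disk>.vmdk
        if ($p -cmatch '^(?<base>.+)-flat\.vmdk$') {
            $descriptor = "$($Matches['base']).vmdk"
            if (-not $known.Contains($descriptor)) { $p }
        }
    }
}

# Invented sample listing
$sample = @(
    '/vm01/vm01.vmdk'
    '/vm01/vm01-flat.vmdk'
    '/old/leftover-flat.vmdk'
    '/iso/install.iso'
)
$suspects = Find-FlatFileWithoutDescriptor -Path $sample
```

With this sample, only the extent under /old has no matching descriptor, so it is the one returned as a suspect. A hit is a reason to investigate, not proof that the file is safe to delete.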

When I dropped the fileType information from the query, it ran a lot faster than with the fileType info. And remember the large datastore that took 13 minutes to scan? Dropping the fileType flag from the query specification caused the query time to drop to about 4 and a half minutes.
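Timings like the ones above could be collected with a small helper along these lines. This is a sketch under assumptions: the function name and its parameters are invented for illustration, and it expects the $DatastoreBrowser view and $RootPath string from the search script, plus a live PowerCLI session for the VMware.Vim types:

```powershell
# Sketch only: the function name and parameters are illustrative assumptions.
function Measure-DatastoreSearch {
    param(
        [Parameter(Mandatory)] $DatastoreBrowser,   # browser view obtained via Get-View
        [Parameter(Mandatory)] [string]$RootPath,   # root path in the form "[DatastoreName]"
        [ValidateSet('FileOwner', 'FileSize', 'FileType', 'Modification')]
        [string[]]$Flag = @()
    )

    # Enable only the requested detail flags
    $flags = New-Object VMware.Vim.FileQueryFlags
    foreach ($name in $Flag) { $flags.$name = $true }

    $spec = New-Object VMware.Vim.HostDatastoreBrowserSearchSpec
    $spec.Details = $flags

    # Time the search itself and discard the results
    $elapsed = Measure-Command {
        $null = $DatastoreBrowser.SearchDatastoreSubFolders($RootPath, $spec)
    }

    [pscustomobject]@{
        Flags   = $Flag -join ', '
        Seconds = [math]::Round($elapsed.TotalSeconds, 1)
    }
}

# Usage, one flag at a time:
# 'FileOwner', 'FileSize', 'FileType', 'Modification' | ForEach-Object {
#     Measure-DatastoreSearch -DatastoreBrowser $DatastoreBrowser -RootPath $RootPath -Flag $_
# }
```

Since the numbers in this article drifted between runs, repeating each measurement a few times is probably wise before drawing conclusions.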

So much for the quantity of data, now what about the quality? Let’s remember our original cause, which was locating files that are taking up significant storage space.

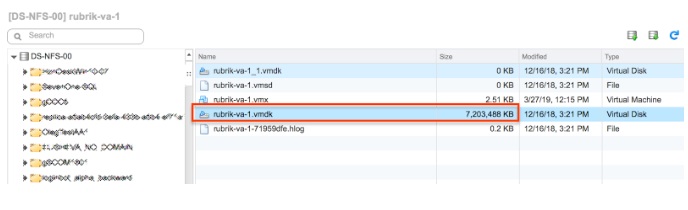

This is the time to mention another key difference between the DatastoreBrowser method and the PSDrive/Get-ChildItem method. It appears that the file objects exposed on our PSDrive will display the size of the entire storage provisioned for the disk, even if it only takes up a small fraction of this storage. This is important for disks configured with thin provisioning, which, when queried with Get-ChildItem will show their entire size. Conversely, when the DatastoreBrowser method is used, the size will correspond to the actual amount of space taken by the thinly provisioned disk, and (which is particularly important for our cause) to the size shown in VMware native tools, as in the following example:

Here’s how the vSphere web client shows the size of one of my disks:

And here’s what I’m seeing when looking at the file object on my PSDrive:

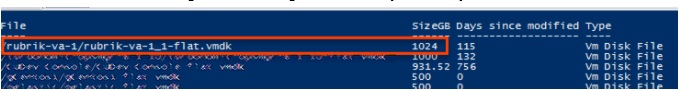

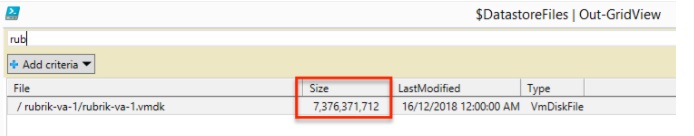

Lastly, here’s what I see in the results obtained using the DatastoreBrowser technique:

(using the lovely Out-GridView window)

As you can see, in the latter example the size in bytes matches the size shown in the vSphere web client.

Now that we know which files we are looking at, and what their size on disk is, we are ready to unleash the DatastoreBrowser on our datastore. Unfortunately, the SearchDatastoreSubFolders method does not give you much flexibility in excluding files based on name, date etc. The only real filter it seems to allow is to return certain file names only. So we have to get all the files and then filter them. We can specify the minimum size of files that we want to look for (remember, we’re looking for the big fish here), and how long ago they were modified:

[cc lang="powershell"]
# Set View
$DatastoreView = Get-View $Datastore.Id

# Minimum file age in days (0 gets all files regardless of age)
[int]$intFileNotModifiedDays = 0

# Minimum file size in GB (0 gets all files regardless of size)
[int]$intFileSizeMinimumGB = 5

# Get current date
[datetime]$dtNow = Get-Date

# This variable will control the file minimum age criterion
[datetime]$dtBeforeDate = $dtNow.AddDays(-$intFileNotModifiedDays)

# Now get the files and filter the results according to the age and size criteria
$DatastoreFiles = Foreach ($obj in $SearchResult) {
    $objEx = $obj | Select-Object -ExpandProperty File
    Foreach ($File in $objEx | Where-Object { ($_.Modification.Date -lt $dtBeforeDate) -and (($_.FileSize / 1GB) -gt $intFileSizeMinimumGB) }) {
        [pscustomobject][ordered]@{
            # Strip the datastore name from the File path, for neatness
            File         = "$($obj.FolderPath.Replace("$RootPath", '/'))$($File.Path)"
            Size         = $File.FileSize
            LastModified = $File.Modification.Date
            # Shorten the file type, for neatness (-replace removes only a trailing 'Info')
            Type         = $File.ToString().Replace('VMware.Vim.', '') -replace 'Info$'
        }
    }
}
[/cc]

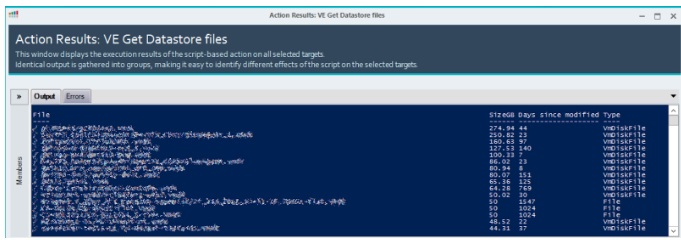

So now we have all the files over a certain size and age on the datastore the way VMware looks at them. A tabular display sorted by size will show the top disk space hogs:

The screenshot above is from the results screen on ControlUp’s Script-based Actions feature, which makes it easy to run the PowerShell script against any datastore of choice (or even multiple ones!).
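Outside ControlUp, a similar size-sorted table can be produced from the $DatastoreFiles collection. The sketch below uses mock rows with invented values so it runs without a vCenter connection; the SizeGB column and the cut-off of two rows are arbitrary choices for illustration:

```powershell
# Mock rows in the same shape as $DatastoreFiles (all values are invented)
$DatastoreFiles = @(
    [pscustomobject]@{ File = '/vm01/vm01.vmdk';    Size = 42GB;  LastModified = [datetime]'2019-01-15'; Type = 'VmDiskFile' }
    [pscustomobject]@{ File = '/tpl/template.vmdk'; Size = 120GB; LastModified = [datetime]'2016-03-02'; Type = 'VmDiskFile' }
    [pscustomobject]@{ File = '/iso/install.iso';   Size = 6GB;   LastModified = [datetime]'2018-07-30'; Type = 'IsoImageFile' }
)

# Largest files first, with the size converted to GB for readability
$TopFiles = $DatastoreFiles |
    Sort-Object -Property Size -Descending |
    Select-Object -First 2 -Property File,
        @{ Name = 'SizeGB'; Expression = { [math]::Round($_.Size / 1GB, 1) } },
        LastModified, Type

$TopFiles | Format-Table -AutoSize
```

Piping $TopFiles to Out-GridView instead of Format-Table gives the sortable window used earlier in this article.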

To delete or not to delete?

So, we have identified a bunch of files that consume a lot of disk space, some of which have been sitting on the datastore unmodified for a long time. However, we still don’t know for certain if a file is orphaned or not. Again @LucD22 has excellent information in his orphaned files article, be sure to check that out. In short: search all the configurations in vCenter for the same files that you found in your search, and filter those out. What is left should all be either files that have nothing to do with VMware, or orphans that should be safe to delete.
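The filtering step can be sketched as a simple set difference. The function name and the sample paths are illustrative; the commented line shows one way the list of referenced files might be gathered in a live session, assuming the LayoutEx property of the VirtualMachine view lists every file a registered VM or template uses:

```powershell
# Sketch only: function name and sample paths are illustrative assumptions.
function Get-UnreferencedFile {
    param(
        [string[]]$Candidate,   # full paths found on the datastore
        [string[]]$InUse        # full paths referenced by registered VMs
    )

    # Case-sensitive lookup, because datastore paths are case-sensitive
    $used = [System.Collections.Generic.HashSet[string]]::new([System.StringComparer]::Ordinal)
    foreach ($p in $InUse) { [void]$used.Add($p) }

    $Candidate | Where-Object { -not $used.Contains($_) }
}

# In a live session the referenced files might be gathered roughly like this (assumption):
# $referenced = (Get-View -ViewType VirtualMachine -Property LayoutEx).LayoutEx.File.Name

# Invented sample data in "[datastore] folder/file" form
$found      = '[DS01] vm01/vm01.vmdk', '[DS01] old/leftover-flat.vmdk'
$referenced = '[DS01] vm01/vm01.vmdk', '[DS01] vm01/vm01.vmx'

$orphanCandidates = Get-UnreferencedFile -Candidate $found -InUse $referenced
```

Whatever comes out of this is a list of candidates for review, for the reasons discussed in the rest of this section.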

Note the word should. There are some edge cases, which may complicate things.

For instance, what if you have multiple ESXi implementations using the same storage but different vCenters? LucD provides a way of searching several vCenters at the same time, which is a good start. But perhaps you are using something like Guy Leech’s cloning script to clone machines (check it out here) and use a ‘master’ set of disks that you clone from which are unknown to vCenter? Or you have made some manual copies of folders for whatever arcane reasons? To quote the immortal Frank Zappa, The Torture Never Stops! It’s always best to double-check before deleting items that you aren’t sure about.

In the case of our storage, cross-referencing the script’s findings with the vCenter inventory enabled us to find some year-old -flat files that bore the name of somebody who had left the company months ago; deleting them saved us a few hundred gigabytes. Also, it turns out that we make a lot of backups of disks to the same storage as the source disk. There’s nothing wrong with that, since we also keep backups on different storage like we should. This is nice and enables a fast restore (if, for example, somebody testing a script to clean up the datastore should accidentally delete all the disks). But then if you look closely, you can see that there are certain machines that are used as templates, and even though their disks have not changed for over three years they are backed up hourly. That calls for some optimization in the backup procedures, with the potential of preventing some future waste of disk space.

Wrapping up

So what have we learned?

- When querying VMware datastores with PowerShell, the SearchDatastoreSubFolders method is superior to querying the datastores using PSDrives, both in terms of performance and relevancy of results to the mission of finding files that consume significant disk space.

- When using the SearchDatastoreSubFolders method, including the FileType in the query specification may significantly impact the duration of the query.

- The hardware specifications of the vCenter as well as the degree of utilization of the vCenter server at query time are both factors that may affect the time it takes to scan your datastores, so if things are unbearably slow consider upgrading the vCenter or running the query at off-peak hours.

- After locating some candidates for deletion, cross-reference the list of files with vCenter’s inventory to make absolutely sure you are not about to cause any damage. And of course, back up everything that you are going to delete, just in case.

All the lessons learned are implemented in a single PowerShell script, which is now available as a ControlUp Script-based Action. This means that when using ControlUp, you can right-click any datastore and launch the script seamlessly, providing an overview of the major disk space hogs. Using the link above, you can also download the script for standalone use. Please leave a comment below if you found this useful!

Huge thanks to LucD for his excellent work on searching datastores, Ze’ev Eisenberg and Guy Leech for being a fountain of information and testing a lot of things for me, and Eugene Kalayev for helping to keep me focused and halfway sane during this entire process.