Over the years, VMware has released many interesting Flings, which are applications or tools built by VMware engineers that add functionality to or simplify VMware products. I have never seen one get as much exposure or generate as much excitement as the latest: ESXi, VMware's bare-metal hypervisor, running on ARM. It has generated a lot of buzz on Twitter, and people have been blogging about it constantly since Kit Colbert, VP & CTO of VMware, announced it on October 7, 2020.

Flings are created by VMware employees for the benefit of VMware customers. VMware uses Flings to gauge interest in a new technology and its viability, and to decide whether it is worthy of becoming an official VMware product. Judging by the chatter, there is a lot of interest in this Fling in particular.

Let’s talk about why this Fling is so exciting, the server I put together to experiment with it, and how I used ControlUp to monitor it.

ARM is an acronym for Advanced RISC Machine, and ARM processors power most cell phones.

VMware is an enterprise software company and, as near as I can figure, it has no interest in putting a hypervisor on a cell phone. However, ARM processors are also used in servers and in discrete components inside the datacenter. Many large public cloud providers use ARM-based servers because they offer superior performance per watt. At VMworld this year, VMware announced Project Monterey, which offloads network, security, and management functions to a SmartNIC that has its own ARM processor.

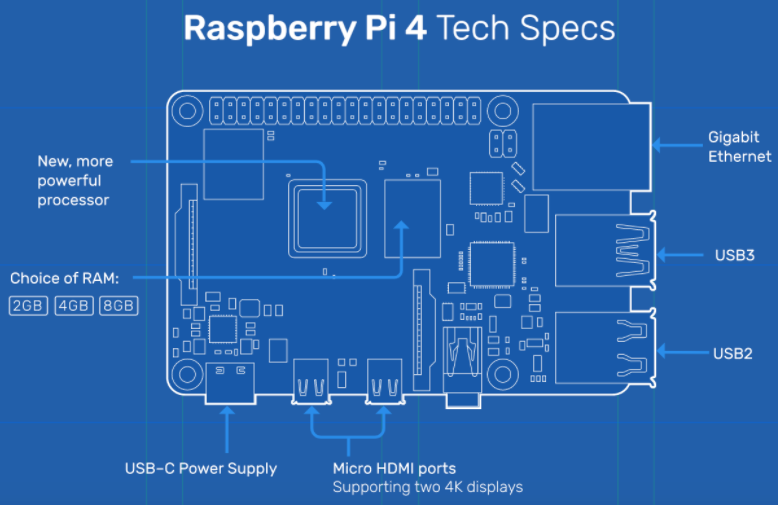

Still, the vast majority of people who are downloading and installing ESXi on ARM are running it on a Raspberry Pi 4B.

The ESXi on ARM Fling site lists the supported ARM hardware and, under Far Edge devices, includes the Raspberry Pi 4B with 8GB of RAM. This is the device that I and most others are using, for the simple reason that it costs less than $100.

To use the Raspberry Pi, you’ll need a case, a power supply, and storage. I went with an Argon One case, a 256GB thumb drive, two 128GB thumb drives, and a 32GB microSD card. The entire kit cost slightly under $200.

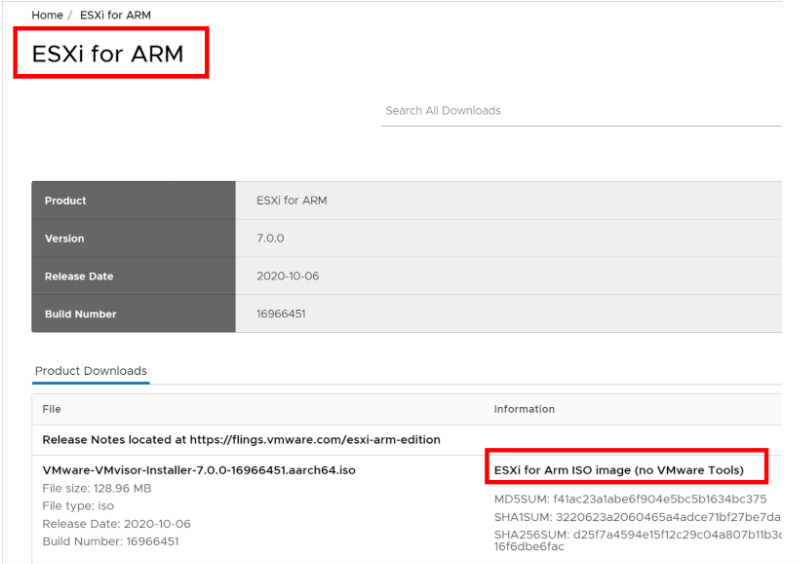

Unlike other Flings, the ESXi on ARM ISO image had to be downloaded from VMware by signing in with my My VMware account.

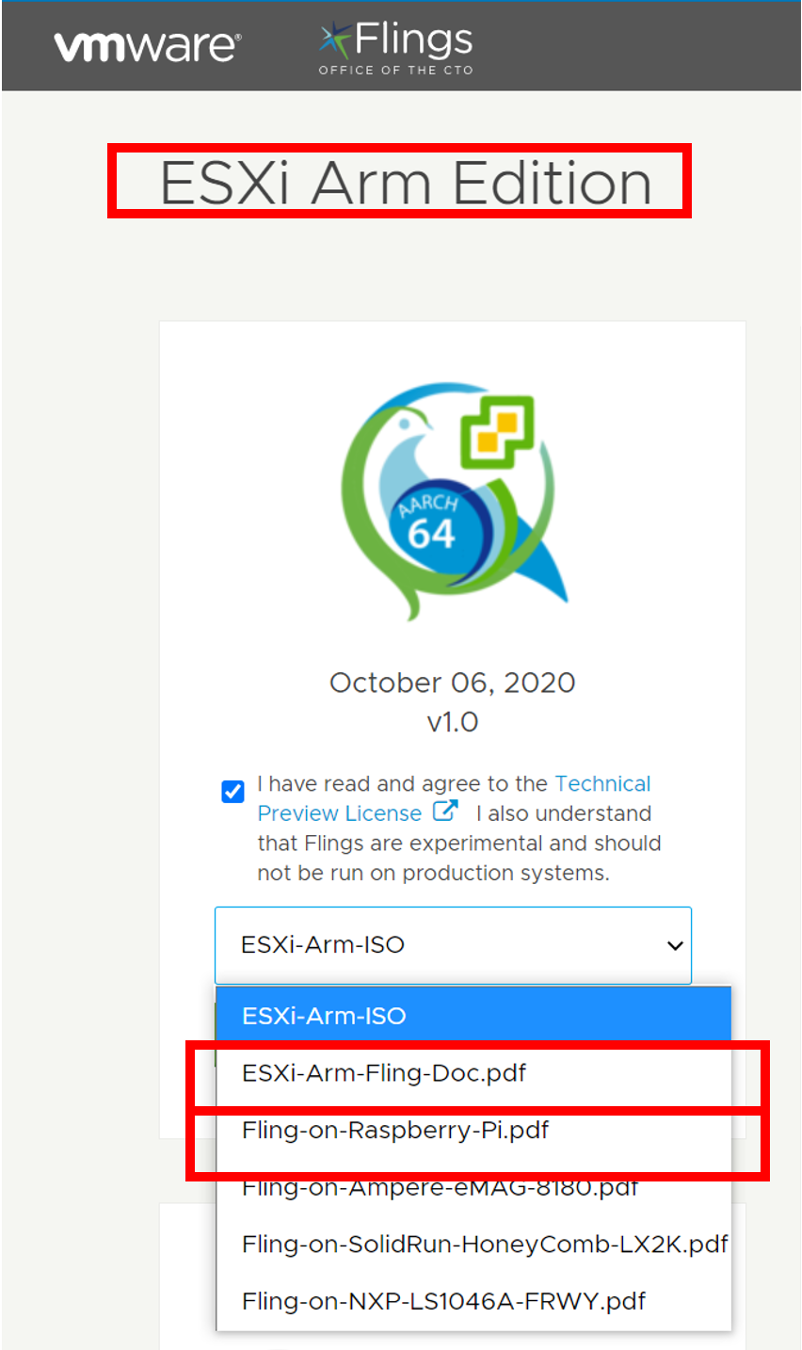

I was able to install ESXi on the Pi without any issues by following the instructions in the ESXi-on-ARM-guide and Fling-on-Raspberry-Pi documents.

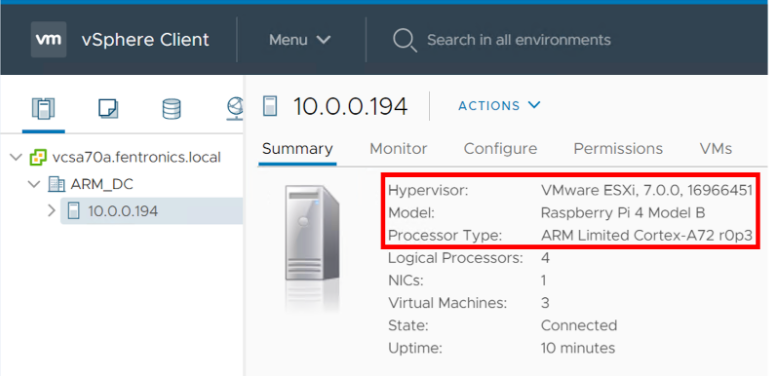

Once ESXi was installed, I added the host to my vCenter Server 7 instance. vCenter Server 6.x is not supported, and I could not attach the ESXi host to a 6.x instance.

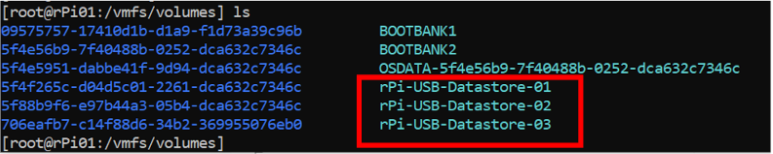

From the ESXi command-line interface, I created VMFS file systems on the three USB thumb drives attached to the Pi, as this can’t be done from the vSphere Client.
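
The Fling documentation is the authority on the exact procedure. As a rough sketch, assuming the standard ESXi `partedUtil` and `vmkfstools` workflow, the helper below only builds the command strings for turning one USB disk into a single-partition VMFS6 datastore; it executes nothing. The device path, datastore name, and the function names `prep_commands` and `format_commands` are hypothetical, and running the generated commands erases the target disk.

```python
from __future__ import annotations

import shlex

# GPT partition type GUID that ESXi uses for VMFS partitions.
VMFS_PARTITION_GUID = "AA31E02A400F11DB9590000C2911D1B8"
# First data sector: 1 MiB alignment, assuming 512-byte sectors.
START_SECTOR = 2048


def prep_commands(device: str) -> list[str]:
    """Hypothetical helper: write a fresh GPT label, then report the usable sector range."""
    dev = shlex.quote(device)
    return [
        f"partedUtil mklabel {dev} gpt",
        f"partedUtil getUsableSectors {dev}",
    ]


def format_commands(device: str, last_usable_sector: int, label: str) -> list[str]:
    """Hypothetical helper: one VMFS partition spanning the disk, formatted as VMFS6.

    `last_usable_sector` is the second number printed by `getUsableSectors`.
    Only strings are built here; nothing runs on the host.
    """
    if last_usable_sector <= START_SECTOR:
        raise ValueError("last usable sector must lie beyond the 2048-sector start")
    # Partition spec: number, start sector, end sector, type GUID, attributes.
    spec = f"1 {START_SECTOR} {last_usable_sector} {VMFS_PARTITION_GUID} 0"
    dev = shlex.quote(device)
    return [
        f"partedUtil setptbl {dev} gpt {shlex.quote(spec)}",
        f"vmkfstools -C vmfs6 -S {shlex.quote(label)} {shlex.quote(device + ':1')}",
    ]
```

Run the two `prep_commands` in the ESXi shell first, read the last usable sector from the `getUsableSectors` output, and then pass it to `format_commands` for each thumb drive.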

I used the vSphere Client to create one Fedora and two Ubuntu virtual machines (VMs). Each VM needed the ARM64 (AArch64) installation media for its distribution. ESXi on ARM does not include VMware Tools, so the tools must be compiled manually and installed on each VM running on it.
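
A quick check inside each guest can confirm both points. The sketch below is hypothetical and rests on two assumptions: that a guest installed from ARM64 media reports `aarch64` (or `arm64`) from `platform.machine()`, and that for Linux guests VMware Tools means open-vm-tools, whose daemon binary is `vmtoolsd`.

```python
from __future__ import annotations

import platform
import shutil


def guest_readiness() -> dict[str, bool]:
    """Hypothetical post-install check for a Linux VM on ESXi on ARM."""
    return {
        # Assumption: ARM64 guests report aarch64 (Linux) or arm64.
        "is_arm64": platform.machine() in ("aarch64", "arm64"),
        # Assumption: open-vm-tools provides vmtoolsd; if it is not on PATH,
        # the tools still need to be built and installed in this guest.
        "has_vmtoolsd": shutil.which("vmtoolsd") is not None,
    }
```

Calling `guest_readiness()` in a freshly installed guest should show `is_arm64` as `True`; `has_vmtoolsd` stays `False` until the tools have been compiled and installed.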

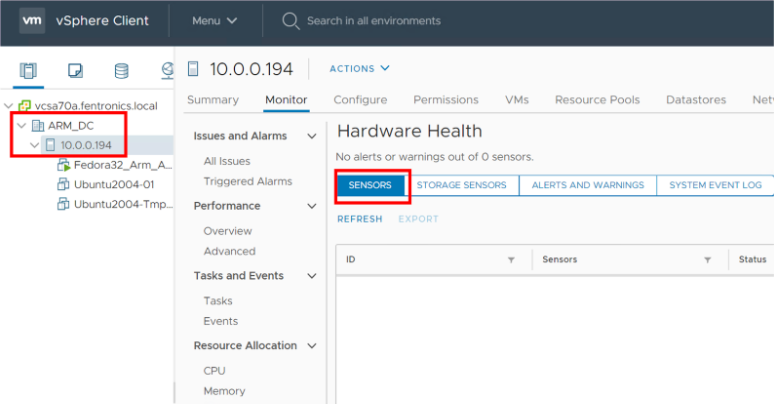

Unfortunately, the vSphere Client does not report sensor data from the Pi.

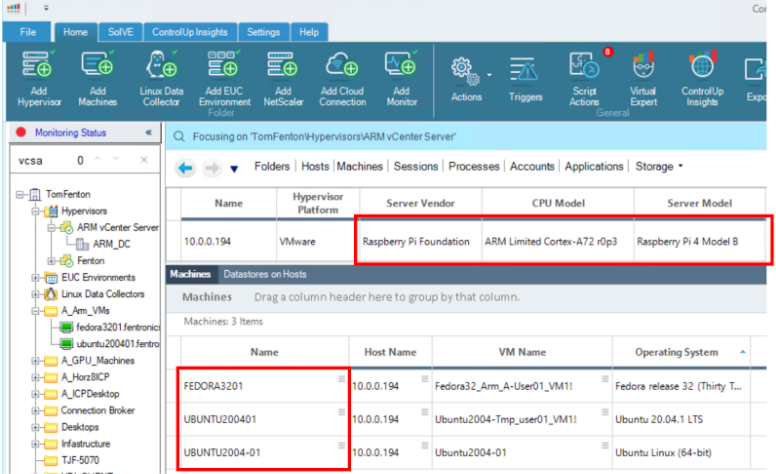

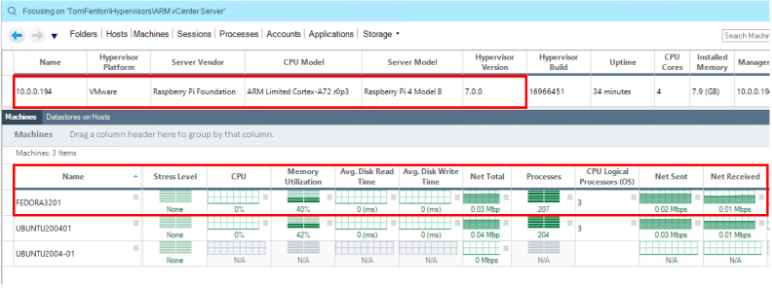

Once the Pi was running and attached to a vCenter Server, I wanted to verify that ControlUp could monitor it. I added that vCenter Server to my ControlUp console without any issues, and within a few seconds I saw the ESXi on ARM host and the VMs running on it.

After I installed Linux Data Collection (LDC) and the ControlUp agent on the VMs, I could see metrics from them.

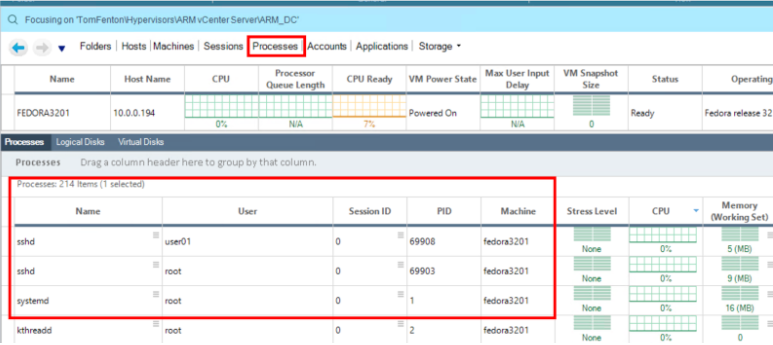

I could also see the processes running on the VMs.

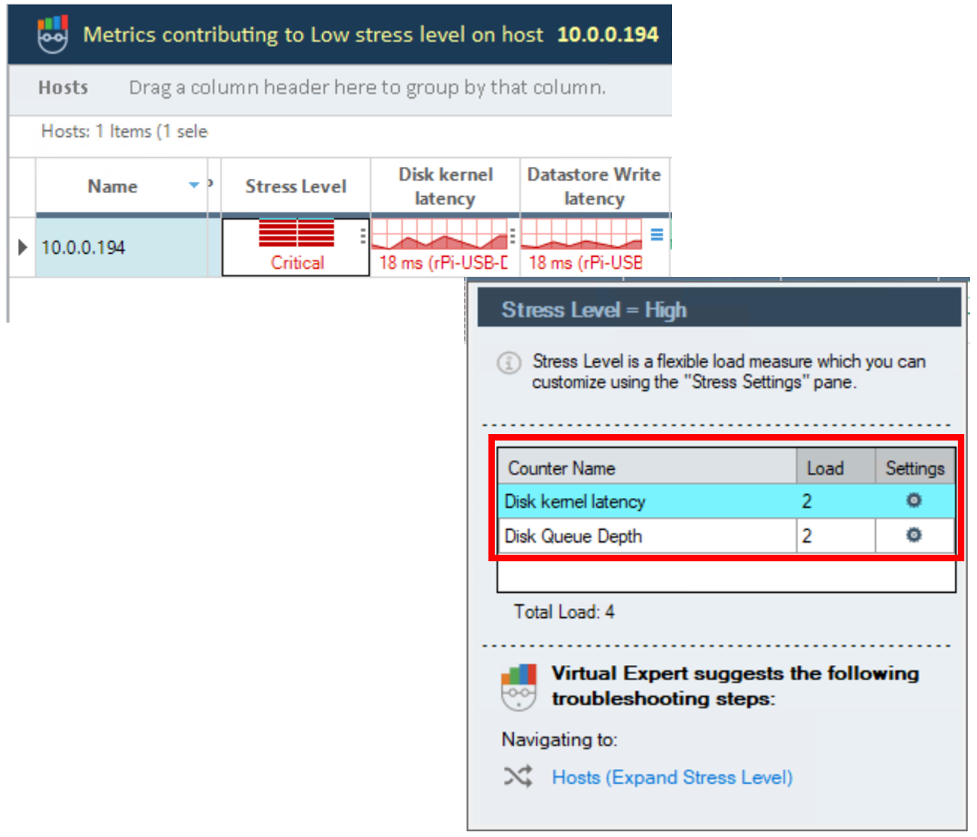

The stress level was red because of disk latency. This didn’t surprise me, since my storage was a thumb drive attached to a USB port.

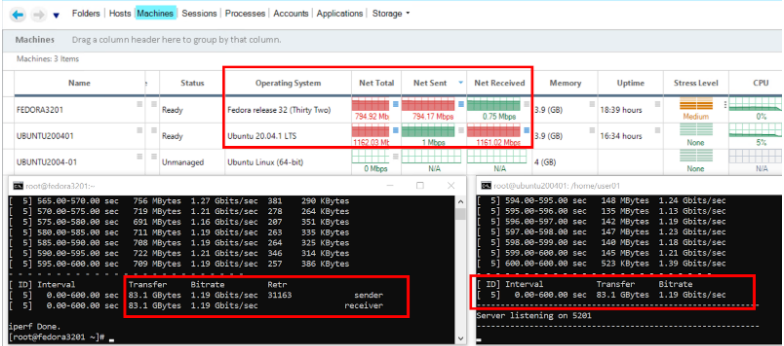

To generate network traffic, I ran iperf3 between an Ubuntu VM and the Fedora VM and monitored the traffic on the ControlUp dashboard.
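
iperf3 can emit its results as JSON with the `-J` flag, which makes it easy to record throughput numbers alongside what the dashboard shows. The helper below is a hypothetical sketch that assumes a TCP test run from the client side (`iperf3 -s` on one VM, `iperf3 -c <server-ip> -J` on the other) and reads the `end.sum_sent` and `end.sum_received` summaries from that output; the function name is illustrative.

```python
from __future__ import annotations

import json


def tcp_throughput_mbps(iperf3_json: str) -> dict[str, float]:
    """Hypothetical helper: summarize an `iperf3 -J` TCP client run in Mbit/s."""
    end = json.loads(iperf3_json)["end"]
    return {
        # Average rate the client sent over the whole test.
        "sent_mbps": end["sum_sent"]["bits_per_second"] / 1e6,
        # Average rate the server reported receiving.
        "received_mbps": end["sum_received"]["bits_per_second"] / 1e6,
    }
```

For example, save the client output with `iperf3 -c <server-ip> -J > result.json` and pass the file's contents to `tcp_throughput_mbps`. The function assumes a TCP test; other test modes may lay out their summaries differently.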

Other ARM Platforms

Most people are experimenting with ESXi on ARM on a Pi, which can also be used in the datacenter as a low-cost witness node for vSAN. VMware also lists some ARM-based servers that ESXi on ARM runs on. A prime example is the Lenovo HR350A datacenter server. It has a 32-core, 64-bit ARMv8 CPU that runs at 3.3 GHz, and it supports up to 512GB of RAM as well as SATA and NVMe drives. The CPU was developed by Ampere; it supports up to 42 lanes of PCIe 3.0 and eight PCIe 3.0 controllers. The server was designed for applications that need a lot of storage and networking, such as machine learning (ML) and artificial intelligence (AI). HR350A systems start at $1,665.

I was able to install ESXi on my Pi in a couple of hours, and it has been running for a couple of weeks without any major incidents. It won’t be replacing any of my servers, but I will be using it to run some VMs. In the future, I would like to attach a 2.5GbE NIC and an NVMe drive to it.

ESXi on ARM is an intriguing proposition for VMware, and it will be interesting to see what use cases arise for it. Right now, the VMware community is talking about using ARM-powered devices for everything from IoT and NICs to home labs. Another open question is how VMware will license it, since it would be tough to charge full price for an ESXi license on a sub-$100 device.

For me, the most important thing is that I was able to use ControlUp to monitor and manage my Raspberry Pi and the VMs running on it without any issues. If low-priced ARM devices do proliferate in the datacenter, there could be hundreds (if not thousands) of them to manage. ControlUp’s background in monitoring and troubleshooting large numbers of objects will be a huge boon to those who are responsible for their care and feeding.